One block from the front door of the building I have lived in for a quarter of a century is a little trapezoid of urban delight called Berczy Park. It is best known for its whimsical fountain with statues of dogs all shooting water up towards a bone on the top, surrounded by a plaza with ample benches and tables and chairs.

At the west end are two large metal hands, originally made to be connected with a cable cat’s cradle, but I haven’t seen the cables in place for some years.

The hands are flanked by mounds of earth, excellent for sitting and reading or sunning or, after dark in the warm months, even setting up a screen and watching a movie.

At the east end, just behind the trompe-l’œil back wall of the Gooderham “Flatiron” Building, is a gravelled area for real live dogs to do those things their owners take them outside to do.

This park is very popular, and has been for as long as it’s been in this design, which is just under ten years. The design was done by Claude Cormier, who was also responsible for some other favourite parks in Toronto. I have photos from exactly a decade ago of it in the middle of being completely redone from its previous design.

The obvious question, of course, is “Who or what is or was Berczy?”

Well, obvious to me and probably to you. Most people don’t seem to pause long to consider it, nor do they seem to wonder how to say it. Universally people around here pronounce it like “brr-zee” (/ˈbɹ̩.zi/). Which is unsurprising, given that the modal language hereabouts is English.

But that’s plainly not an English name.

I assumed for quite a few years that it was a Polish name, because cz is characteristically Polish (and not, by the way, Czech – in fact, Czech for “Czech” is čeština). In that case, it would be pronounced /ˈber.ʈʂɨ/. But you need not learn how to say that, because it is not, in fact, a Polish name.

It is not, really, an anything name. It’s as authentic as Häagen-Dazs (which, in case you don’t know, was invented by Reuben Mattus in the Bronx with the intent of looking Danish, which it doesn’t quite really). But it was the name used – legally, and passed on to his sons – by William Berczy, artist, art dealer, settler, adventurer, now viewed as a co-founder of Toronto. Berczy also oversaw the creation of a stretch of Yonge Street (the central north-south street of Toronto, long vaunted – somewhat fraudulently – as the longest street in the world), and founded the town now known as Markham, which Torontonians will know as an important part of the “905” northern suburbs. Berczy himself lived closer to the waterfront in Toronto. He subsequently lived in Montreal, where he designed an Anglican cathedral, and he is buried at Trinity Church, Wall Street, in New York City, where he died while on a business trip.

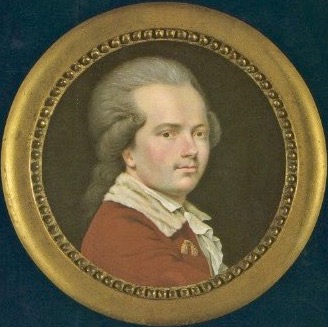

Self-portrait of William Berczy, National Gallery of Canada, via Wikimedia

But, as I said, William Berczy was not originally William Berczy, and he also was not Polish – although he spent some of his young adulthood in Poland, on some risky quasi-diplomatic missions. So (1) Who was he before he was William Berczy and (2) Where did this name Berczy come from?

The first question is easy. He was born Johann Albrecht Ulrich von Moll – a much more businesslike German name. He was born in Bavaria; his family moved in his childhood to Vienna, where his father was a politician and diplomat. There is an enjoyable novelization of his life by John Steffler titled German Mills (they truly could have come up with a much better title). It covers, with rich detail and as much fiction as is necessary to mortar the bricks of the story, his life from his adventuresome youth in war-torn Central Europe, through his years as a painter in Italy and Switzerland and his marriage to Swiss artist Charlotte Allamand, and on to his life as an art dealer in London and then as the leader of a group of German settlers first in New York State and then in Upper Canada, and then – after reversals by people in power led to the settlers getting much less than initially promised – returning to his work as a portrait artist, at which he made a good name for himself.

Berczy’s portrait of Joseph Brant hanging in the Art Gallery of Ontario

So: after business and some liminal years, art. It was quite an adventure; he was a man ever on the move, ever doggedly climbing, ever remaking himself.

Steffler’s book does not, however, answer question number 2: the origin of the name Berczy. It adverts to Moll’s having taken on the name and used it increasingly, and having used it officially at his marriage and onward, but not once does it address the question of where it came from.

I have, on loan from the Toronto Public Library, a book published in 1991 by the National Gallery of Canada, titled Berczy. The larger part of the book is a catalogue of William Berczy’s paintings and drawings, but it has several essays. And in the essay “The European Years, 1744–1791,” by Beate Stock, I find, on page 28, the following paragraph:

How Albrecht Moll arrived at the surname Berczy is a matter of some speculation. It may have held romantic associations for him, as the name Bérczy in Hungarian is a poetic (somewhat aristocratic) way of saying “from the mountains” (bérc is literally “crag” or “peak”). Was the name chosen as a reminder of his adventures among the Hungarians? Or did it perhaps derive from his youthful nickname “Bertie,” altered to “Berczy” by his Hungarian acquaintances? Why he took another name, according to Berczy, was in order to relinquish any further claim on his patrimony, and thus to safeguard it for his mother’s use.

(It should be noted that his father died when Berczy was in his twenties, leaving the family in financial straits.) To add to that, in the Dictionary of Canadian Biography entry on him by Ronald J. Stagg, there is this tantalizing passage:

If one of his writings is taken at face value, while on a diplomatic mission to Poland in the 1760s he had to hide in a Turkish harem and was captured by a Hungarian bandit. It may have been at this time that his nickname, Bertie, became Bertzie (in Hungarian, Berczy).

This doesn’t really clear things up perfectly. It does seem to indicate that the resemblance to Bercy, a neighbourhood of Paris that has its own Parc de Bercy (near a stadium and train station, as Berczy Park is), is coincidental. But in modern Hungarian, Berczy is not a possible spelling: y is used only in specific digraphs (ly, ny, ty), and cz is not a possible combination – it could be cs or just c, or sz or zs or just z, each with its own pronunciation, but cz is not available.

But the tidiness and consistency of Hungarian spelling are immediate evidence of a fairly recent spelling reform – which, indeed, took place in the 1800s, well after William Berczy got his name. At Berczy’s time, Hungarians would have spelled the sound /ts/ – now spelled as just c – one of two ways: tz or cz (a spelling difference that, I learn from an article by Johanna Laakso, was divided between Protestants and Catholics). And the letter y also had a different use at the time and could be said /i/. So there we have it.

But, you know, he may have been /ˈber.tsi/ (“bear tsee”) to himself and his family, but his coffin in the Trinity Wall Street graveyard was marked “Berksey,” so plainly some people pronounced it differently. And his younger son, Charles Albert Berczy, first postmaster of Toronto, likely went with however the people around him chose to say his name. And now the park is solidly “brr zee,” as I have said, and that’s unlikely to change.

Unlike the park. You may recall that I said the park was redone about a decade ago. Before that, it had much more interiority to it for such a small park: it had a fountain (less fancy but nice enough) in the middle, surrounded by benches, but at that time that little round plaza was surrounded by berms of earth and rows of trees.

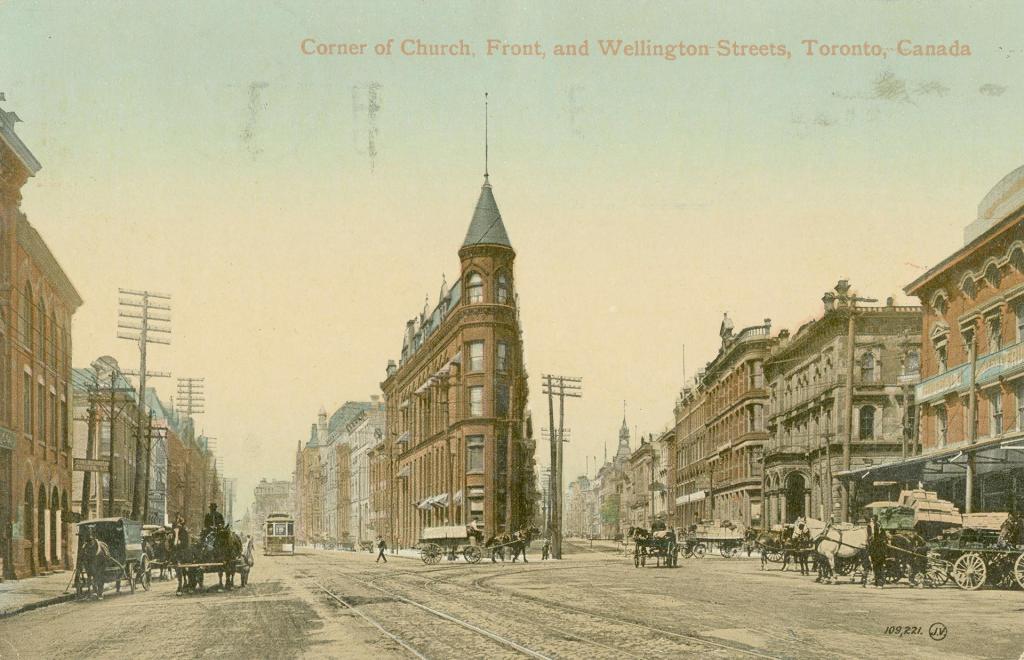

But that version of the park was itself a reinvention of what was there before. That block, tipped by the Gooderham Building, was a block full of office and business buildings by the start of the 20th century.

Postcard, approximately 1910, of the Gooderham Building viewed from the east; the future Berczy Park is the row of buildings behind it. From the Toronto Public Library’s Digital Archive

Scott Street, looking north from south of Front Street, 1955; the west end of Berczy Park, where the hands now are, is in this photo occupied by the white building front left. From the Toronto Public Library’s Digital Archive

In the 1960s, most of the buildings on that block (except the Gooderham) were demolished for a planned arts centre. But government reversals led to the arts centre being much less than initially promised – it became the St. Lawrence Centre, a building that occupies a half block of the south side of Front Street, across from Berczy Park. And the unused land became the classic Toronto placeholder: a parking lot. A parking lot in downtown Toronto is always a once and future building, a minimum-liability placeholder, somewhere something is going to be put. Only in this case, instead of another building, in 1980 they put a park there and named it after William Berczy. So: after business and some liminal years, art.

And after that, more art.

And now the park is one of the most European-feeling places in Toronto. An artificial European vibe, perhaps, but that seems suitable, no?

And this fanciful name has spread itself onto businesses as well. A recently established restaurant just a bit farther east on Front Street is called the Berczy Tavern; a building just west of the park, long known as the EDS Building and then the Altus Group Building, is now branded Berczy Square.

So William Berczy finally has the recognition he always wanted – at least among those of us who, seeing the name on the park, undertake some dogged digging into fountains of knowledge. Perhaps eventually there will be a plaque about him, or a reproduction of one of his paintings…

Note: All photos except those otherwise credited are by me (James Harbeck). See more of my photos of Berczy Park on Flickr.